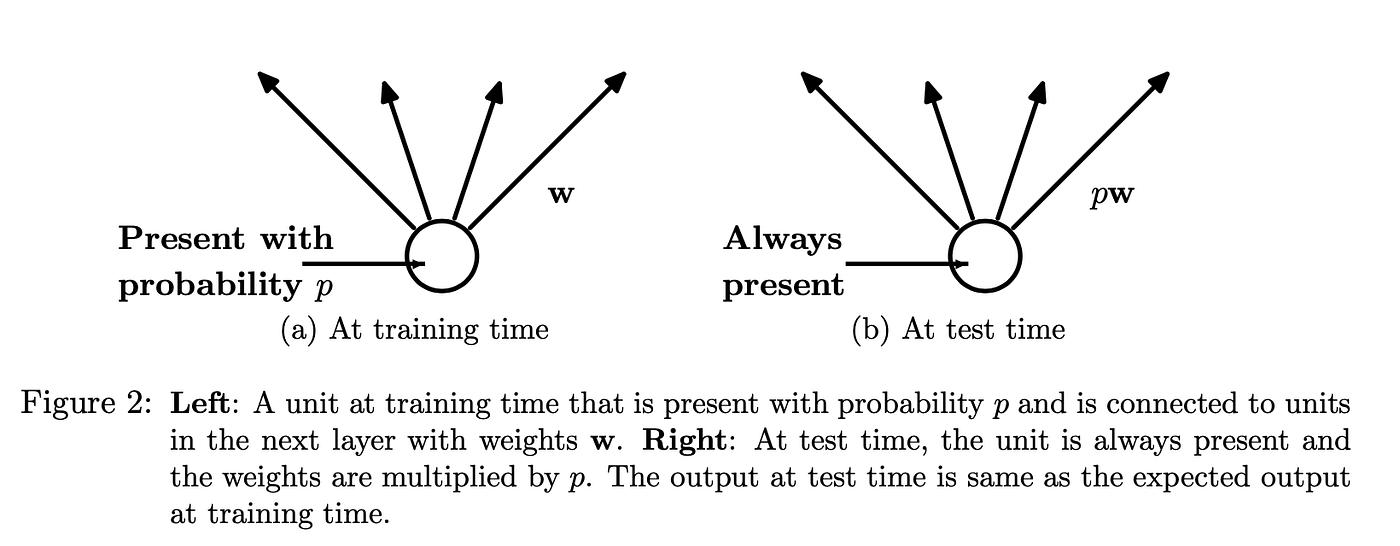

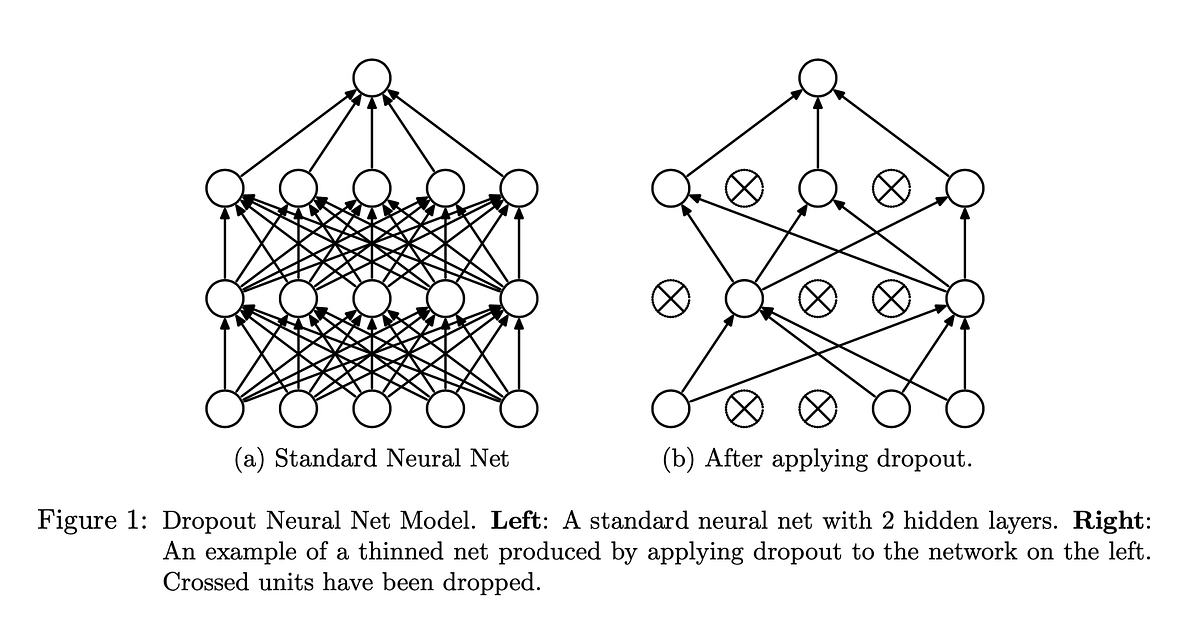

Dropout: A Simple Way to Prevent Neural Networks from Overfitting - The paper introduces dropout as a simple and powerful regularization technique that significantly reduces overfitting in deep neural networks. The key idea is to randomly drop units (along with their connections) from the neural network during training. The authors present this as a way to prevent overfitting and to approximately combine exponentially many different neural networks. They show that dropout improves the performance of neural networks on supervised learning tasks in vision and speech recognition, among other areas. A related method, 'standout', overlays a binary belief network on a neural network and uses it to regularize the hidden units.
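
The idea of randomly dropping units during training can be sketched in a few lines of plain Python. This is a minimal illustration, not the authors' implementation: the names `dropout`, `drop_prob`, and `num_thinned_networks` are hypothetical, and the sketch assumes the common "inverted" variant, which rescales the surviving units during training so that nothing needs to change at test time.

```python
import random


def dropout(activations, drop_prob, training=True, rng=random):
    """Apply (inverted) dropout to a list of unit activations.

    Assumption: the 'inverted' variant, which rescales kept units at
    training time. Names and defaults here are illustrative only.
    """
    if not 0.0 <= drop_prob < 1.0:
        raise ValueError("drop_prob must be in [0, 1)")
    if not training or drop_prob == 0.0:
        # At test time every unit is kept and nothing is rescaled.
        return list(activations)
    keep_prob = 1.0 - drop_prob
    # Keep each unit independently with probability keep_prob. Survivors are
    # divided by keep_prob so the expected activation is unchanged.
    return [a / keep_prob if rng.random() < keep_prob else 0.0
            for a in activations]


def num_thinned_networks(num_units):
    """Each unit is either kept or dropped, giving 2**n possible sub-networks."""
    return 2 ** num_units


rng = random.Random(0)  # seeded only to make the sketch repeatable
hidden = [0.5, 1.0, 1.5, 2.0]

train_out = dropout(hidden, drop_prob=0.5, rng=rng)        # some units zeroed
test_out = dropout(hidden, drop_prob=0.5, training=False)  # all units kept

print(train_out)
print(test_out)
print(num_thinned_networks(len(hidden)))
```

Each training step samples a different mask, so a layer with `n` droppable units can realize up to `2**n` different "thinned" networks that all share the same weights. That is the sense in which dropout approximately combines exponentially many networks.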

![Figure 5 from Dropout: A Simple Way to Prevent Neural Networks from Overfitting](https://d3i71xaburhd42.cloudfront.net/34f25a8704614163c4095b3ee2fc969b60de4698/11-Figure5-1.png)

![Figure 3 from Dropout: A Simple Way to Prevent Neural Networks from Overfitting](https://figures.semanticscholar.org/34f25a8704614163c4095b3ee2fc969b60de4698/6-Figure3-1.png)

![Table 2 from Dropout: A Simple Way to Prevent Neural Networks from Overfitting](https://d3i71xaburhd42.cloudfront.net/34f25a8704614163c4095b3ee2fc969b60de4698/8-Table2-1.png)

![Figure 12 from Dropout: A Simple Way to Prevent Neural Networks from Overfitting](https://figures.semanticscholar.org/34f25a8704614163c4095b3ee2fc969b60de4698/20-Figure12-1.png)