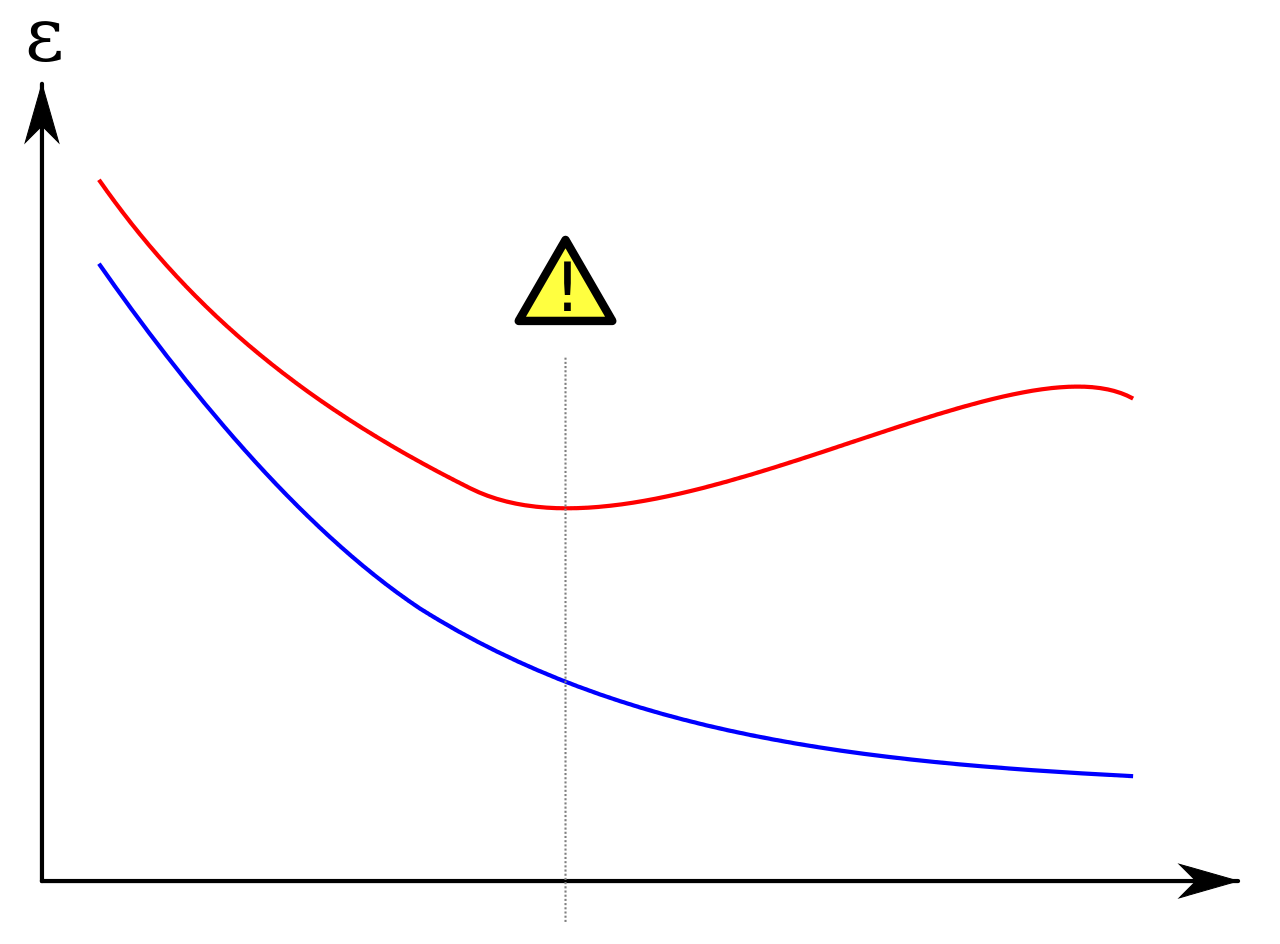

Prevent Overfitting in Gradient Boosting

In this article, we'll explore frequent errors and provide tips for optimizing XGBoost models. In gradient boosting, it often […]. In general, there are a few parameters you can tune to reduce overfitting. The easiest to conceptually understand is to […]. The objective function combines the loss function with a regularization term to prevent overfitting.
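For reference, one common way to write such a regularized objective is the form used in the XGBoost documentation. The symbols are assumptions brought in from there and do not appear in this article: $l$ is the per-example loss, $f_k$ is the $k$-th tree, $T$ is its number of leaves, $w_j$ are its leaf weights, and $\gamma$ and $\lambda$ are the penalty strengths.

$$
\mathrm{Obj} = \sum_{i=1}^{n} l(y_i, \hat{y}_i) + \sum_{k=1}^{K} \Omega(f_k),
\qquad
\Omega(f) = \gamma T + \tfrac{1}{2} \lambda \sum_{j=1}^{T} w_j^2
$$

The first sum rewards fitting the training data, while the second penalizes trees with many leaves or large leaf weights, which is what discourages overfitting.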

Gradient Boosting Algorithm Guide with examples

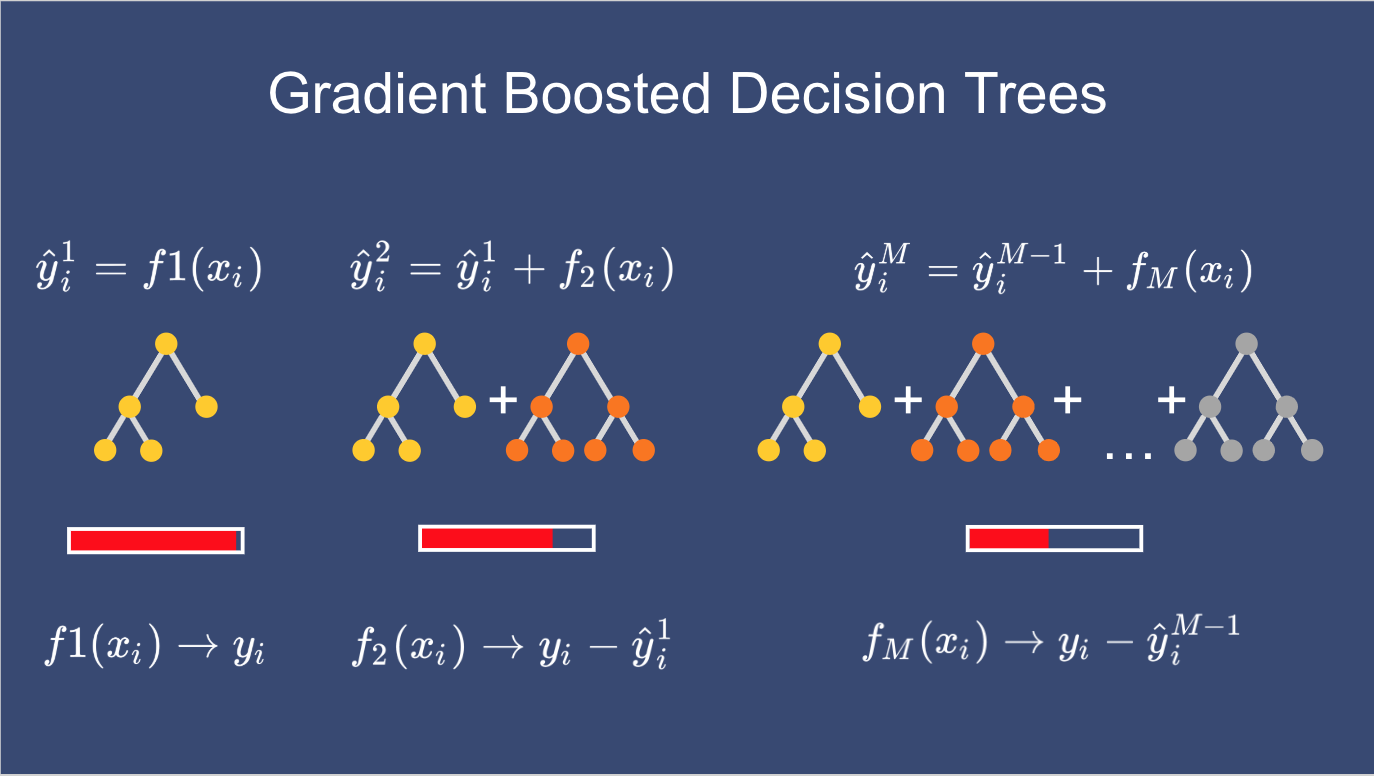

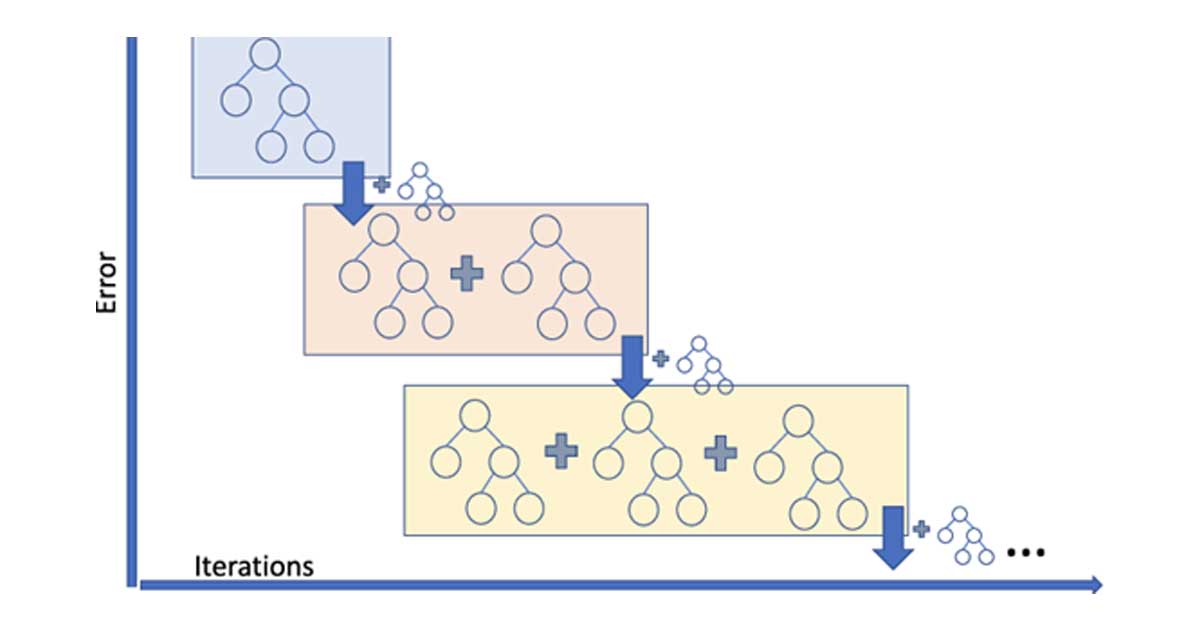

Gradient Boosting

Gradient Boosting Algorithm Explained GenesisCube

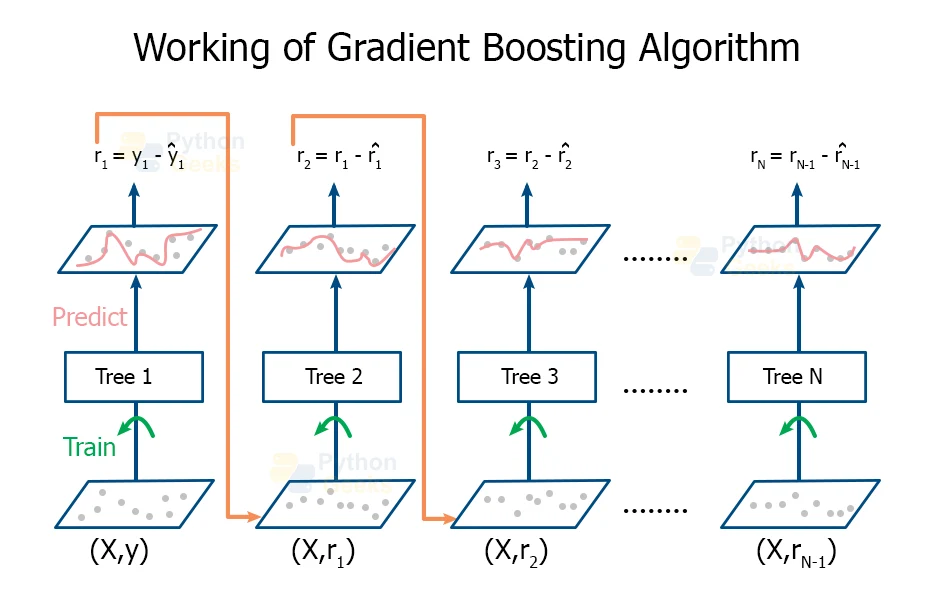

Does gradient boosting overfit The Kernel Trip

Mastering The New Generation of Gradient Boosting TalPeretz

Gradient Boosting Definition DeepAI

Gradient Boosting The Ultimate Tool for Advanced Machine Learning

Parameters That Reduce Overfitting

In general, there are a few parameters you can tune to reduce overfitting. The objective function combines the loss function with a regularization term, so strengthening that term makes the model more conservative.
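As a rough illustration of the parameters mentioned above, the sketch below lists some XGBoost settings that are commonly tightened to limit overfitting, then shows in plain Python how two of them act on a single candidate split. The parameter names are real XGBoost names, but the values are illustrative assumptions, not recommendations from this article. The helper functions `leaf_weight` and `split_gain` are hypothetical names that follow the leaf-weight and gain formulas from the XGBoost documentation, and the gradient sums are made-up numbers.

```python
# Illustrative starting values only; the right settings depend on the data.
# The keys are real XGBoost parameter names, the values are assumptions.
conservative_params = {
    "max_depth": 4,           # shallower trees, less complex model
    "min_child_weight": 5,    # require more Hessian mass in each child
    "gamma": 1.0,             # minimum loss reduction needed to split
    "subsample": 0.8,         # row subsampling per tree
    "colsample_bytree": 0.8,  # feature subsampling per tree
    "eta": 0.05,              # learning rate (shrinkage)
    "lambda": 1.0,            # L2 penalty on leaf weights
    "alpha": 0.0,             # L1 penalty on leaf weights
}


def leaf_weight(grad_sum, hess_sum, reg_lambda):
    """Optimal leaf weight under an L2 penalty: w* = -G / (H + lambda)."""
    return -grad_sum / (hess_sum + reg_lambda)


def split_gain(g_left, h_left, g_right, h_right, reg_lambda, gamma):
    """Gain of a candidate split; it is only worth making if this is > 0."""
    def score(g, h):
        return g * g / (h + reg_lambda)

    parent = score(g_left + g_right, h_left + h_right)
    children = score(g_left, h_left) + score(g_right, h_right)
    return 0.5 * (children - parent) - gamma


# Toy gradient/Hessian sums for one candidate split (made-up numbers).
g_l, h_l, g_r, h_r = -4.0, 3.0, 2.0, 2.0

for lam, gam in [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (10.0, 1.0)]:
    gain = split_gain(g_l, h_l, g_r, h_r, lam, gam)
    weight = leaf_weight(g_l, h_l, lam)
    print(f"lambda={lam:>4} gamma={gam:>3} gain={gain:.3f} left_weight={weight:.3f}")
```

Raising `lambda` shrinks both the leaf weight and the gain, and raising `gamma` subtracts directly from the gain, so with strong enough penalties the split's gain becomes negative and the split would not be kept.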